AI Literacy in the Classroom: Teaching Students to Learn With AI, Not Just From It

The classroom of 2026 looks different than it did just a few years ago. Walk into any school today, and you’ll find students with AI assistants at their fingertips, capable of answering questions, writing essays, solving math problems, and generating creative content in seconds. For educators, this presents both an unprecedented challenge and an extraordinary opportunity.

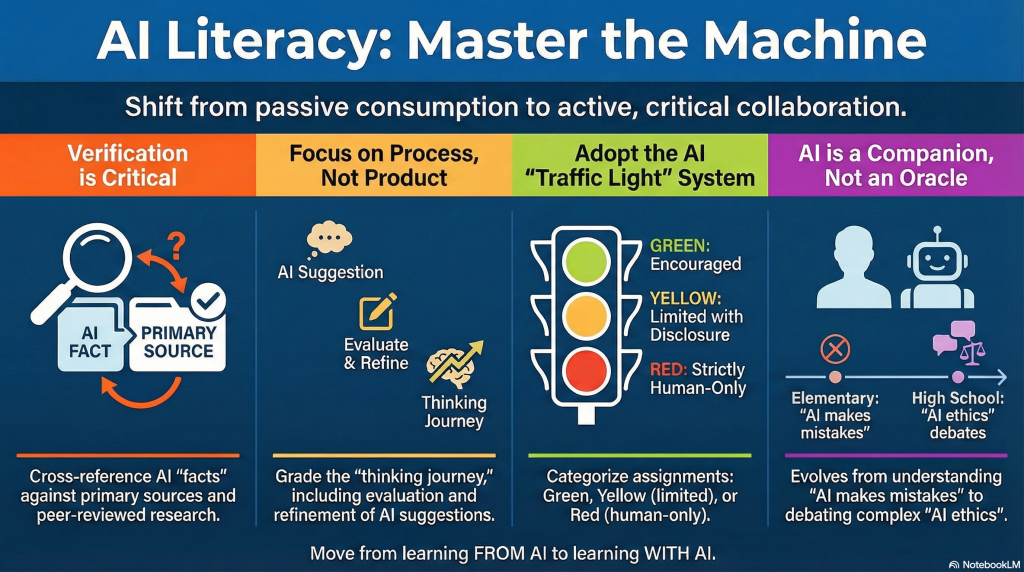

The question is no longer whether students will use AI, but how we can teach them to use it wisely, critically, and ethically. The goal isn’t to ban these tools or pretend they don’t exist. Instead, we need to cultivate a new kind of literacy: one that empowers students to learn with AI rather than simply learning from it.

The New Reality: AI as a Learning Companion

Artificial intelligence has become as commonplace in education as calculators once were, and the debate mirrors the calculator controversy of decades past. Just as math teachers eventually learned to integrate calculators while still teaching fundamental arithmetic, today’s educators are discovering how to incorporate AI tools while preserving critical thinking and deep learning.

The difference is that AI’s reach extends far beyond mathematics. It touches every subject, from literature to history, science to art. This makes the stakes higher and the pedagogical challenge more complex.

Teaching Verification: The Most Critical Skill

Perhaps the most important skill we can teach students in 2026 is healthy skepticism. AI tools are impressive, but they’re not infallible. They can generate plausible-sounding answers that are factually incorrect, perpetuate biases present in their training data, or simply misunderstand nuanced questions.

Educators are developing practical strategies to build verification habits. In history classes, students are being asked to fact-check AI-generated summaries of historical events against primary sources. In science courses, they’re learning to cross-reference AI explanations with peer-reviewed research. In literature classes, they’re comparing AI interpretations of texts with their own close readings.

One particularly effective approach involves what teachers call “AI red teaming.” Students are given prompts designed to expose AI limitations, such as asking for information about very recent events, requesting analysis of complex ethical dilemmas, or posing questions that require understanding of local context. Through these exercises, students learn firsthand where AI excels and where it falls short.

“We’re at the cusp of using AI for probably the biggest positive transformation that education has ever seen.” – Sal Khan (Founder of Khan Academy)

Redefining Writing and Research

The traditional five-paragraph essay is being reimagined. When AI can generate a competent essay in seconds, what’s the point of assigning one? Forward-thinking educators are finding the answer lies not in abandoning writing instruction, but in evolving it.

The focus is shifting from final products to processes. Students are being asked to document their thinking journey, showing how they used AI as a brainstorming partner, how they evaluated and refined AI suggestions, and how they integrated their own original analysis and voice. The emphasis is on metacognition: thinking about thinking.

Research projects now often include an “AI transparency statement” where students explain which tools they used, how they used them, and how they verified the information. This mirrors the citation practices that have long been standard in academic work, extending them to a new type of source.

Age-Appropriate AI Literacy

Just as we wouldn’t teach a first-grader and a high school senior the same math concepts, AI literacy needs to be developmentally appropriate.

Elementary School: The focus is on understanding that AI is a tool created by humans, not a magical oracle. Young students learn basic concepts like “AI can make mistakes” and “Always check with a trusted adult.” Activities might include comparing AI-generated stories with human-written ones, or using AI art generators to understand how these tools work.

Middle School: Students begin exploring how AI is trained, what bias means, and why different AI tools might give different answers to the same question. They learn to evaluate sources more critically and to distinguish correlation from causation and fact from opinion.

High School: Older students engage with deeper questions about AI ethics, societal impact, and responsible use. They might analyze case studies of AI failures or successes, debate the implications of AI in various professions, and consider how AI might shape their future careers.

Assignments That Work in an AI Age

Creative educators are designing assignments that leverage AI’s capabilities while still requiring genuine student engagement and learning. Here are some examples being used in classrooms today:

A history teacher asks students to use AI to generate an initial timeline of World War II, then fact-check it against their textbook and primary sources, documenting all errors and omissions they find. The assignment becomes as much about historical accuracy as it is about AI literacy.

An English teacher has students use AI to generate three different analyses of a poem, then write an essay arguing which interpretation is most convincing and why, supporting their argument with evidence from the text. Students must engage deeply with the poem to evaluate the AI’s work.

A math teacher allows students to use AI to solve problems, but requires them to explain each step in their own words and identify one part of the solution they found confusing or surprising. This ensures they’re not just copying answers but genuinely understanding the mathematics.

The Ethics Conversation

Perhaps the most important classroom discussions happening in 2026 revolve around ethics. Students are grappling with real questions: When does using AI cross the line into cheating? How much AI assistance is too much? What responsibilities do we have when using tools that might perpetuate biases or spread misinformation?

These aren’t abstract debates. Schools are developing AI use policies, and students are often part of the conversation. Some schools have adopted a “traffic light” system: green for assignments where AI use is encouraged, yellow for assignments where limited AI use is acceptable with disclosure, and red for assignments where AI use is prohibited.

The goal is to help students internalize ethical decision-making rather than simply following rules. When they enter the workforce, there won’t be a teacher telling them when AI use is appropriate. They need to develop that judgment themselves.

Digital Citizenship in the AI Era

AI literacy is ultimately one part of digital citizenship. Students need to understand not just how to use AI, but how AI uses them. Discussions about data privacy, algorithmic bias, and the environmental cost of AI computing help students become informed digital citizens.

Many schools are incorporating units on how AI systems work, including the massive datasets they’re trained on and the potential implications for privacy and consent. Students learn that when they use free AI tools, they’re often providing data that trains future versions of those tools.

The Teacher’s Role: Evolving, Not Obsolete

Some feared that AI would make teachers obsolete. Instead, it’s making their role more important than ever. In a world where information is instantly accessible, the teacher’s job is becoming less about delivering content and more about guiding students in how to think about that content.

Teachers are curators, helping students navigate the overwhelming amount of information available. They’re coaches, supporting students as they develop critical thinking skills. They’re role models, demonstrating intellectual curiosity and ethical reasoning. And they’re designers, creating learning experiences that are meaningful and engaging in an AI-augmented world.

“AI literacy is not just about understanding the technology; it is about understanding what it means to be human in a world where we have these powerful tools.” – Dr. Rose Luckin (Professor of Learner Centred Design at UCL Knowledge Lab)

Looking Forward

We’re still in the early stages of understanding how to educate in an AI world. Best practices are emerging, but they’re constantly evolving as the technology advances. What’s clear is that ignoring AI or attempting to ban it from schools is not a viable strategy.

The students in our classrooms today will enter a workforce where AI collaboration is standard. Our job is to prepare them not just to use these tools, but to use them thoughtfully, critically, and ethically. We need to help them understand both the remarkable capabilities and the significant limitations of AI.

The goal of education has always been to prepare students for the world they’ll inherit, not the world we grew up in. In 2026, that means embracing AI as a powerful learning tool while ensuring that students develop the human capabilities that AI cannot replicate: creativity, empathy, ethical reasoning, and critical thinking. The classroom of tomorrow isn’t human versus machine. It’s humans and machines, working together, with educators guiding the way.